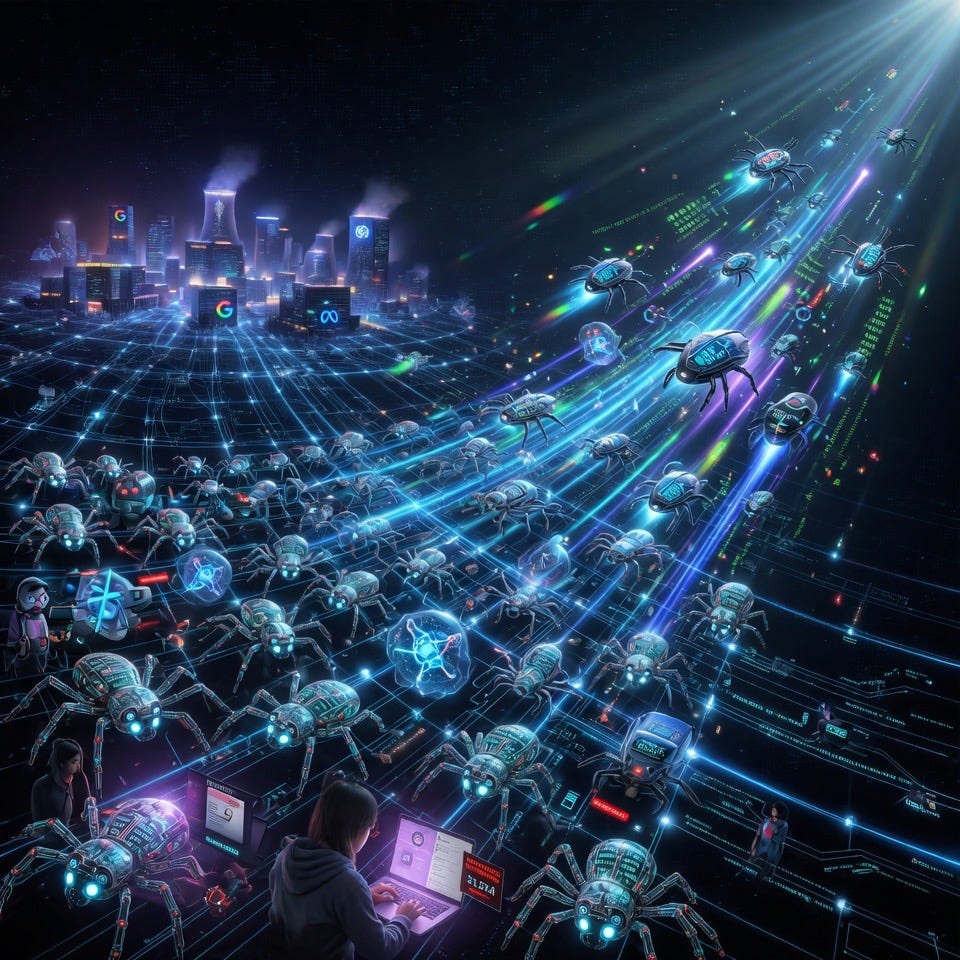

The Bot Takeover: Over Half of Global Internet Traffic Is Now Generated by AI – Where It Comes From, Who’s Behind It, Why It’s Exploding, and What It Means

In April 2026, Lumen Technologies CEO Kate Johnson delivered a wake-up call that cut through the noise of daily tech headlines. From her company’s vantage point, which covers roughly 65 percent of worldwide internet traffic, Johnson reported that more than 50 percent of global data flows now originate from AI bots and autonomous systems rather than human beings. These are not the crude spam scripts or basic scrapers of earlier decades. They are sophisticated, continuously operating “autonomous workers” that crawl, query, generate, and interact at scales and speeds once unimaginable.

This shift marks a profound reorientation of the internet: from a network built primarily for human-to-human exchange to a machine-to-machine ecosystem. Independent reports released around the same period reinforce the picture. Imperva’s 2025 Bad Bot Report documented automated traffic surpassing human activity for the first time in a decade, reaching 51 percent of global web traffic in 2024 and continuing upward. HUMAN Security’s 2026 State of AI Traffic & Cyberthreat Benchmark Report, analyzing over one quadrillion interactions, recorded AI-driven traffic growing 187 percent year-over-year in 2025, with agentic (autonomously acting) AI surging an extraordinary 7,851 percent. The data is consistent across backbone providers, cloud observatories, and security platforms: the internet’s machine layer has overtaken its human one.
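Growth percentages of this size are easier to compare once they are converted to multipliers. The following is a minimal Python sketch of that arithmetic, using only the two HUMAN Security figures quoted above; the function name is illustrative and does not come from either report.

```python
def growth_to_multiplier(percent_growth: float) -> float:
    """Convert year-over-year growth in percent to an end/start volume ratio."""
    return 1 + percent_growth / 100


# Growth figures as quoted in the text (percent, year-over-year).
quoted_growth = [("AI-driven traffic", 187), ("agentic AI", 7851)]

for label, pct in quoted_growth:
    ratio = growth_to_multiplier(pct)
    print(f"{label}: +{pct}% means about {ratio:.2f}x the prior-year volume")
```

Read this way, 187 percent growth is just under a tripling, while 7,851 percent growth is close to an eightyfold increase.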

Understanding this transformation requires looking at the geography of the traffic, the handful of organizations driving it, the economic and technical reasons behind the surge, and the practical trade-offs now facing publishers, businesses, infrastructure operators, and everyday users.

Where the Traffic Originates

The bulk of bot traffic flows from data centers and cloud infrastructure concentrated in the United States, which accounts for the lion’s share of origins according to multiple network telemetry sources. Major platforms such as Amazon Web Services and Google Cloud alone generate a significant portion of verified AI activity. Because Lumen’s backbone measurements capture the full spectrum—HTTP requests, APIs, data transfers, and machine-to-machine communications—the 50 percent-plus figure reflects total automated flows rather than just visible web-page loads.

Web-focused metrics tell a complementary story. Cloudflare’s 2025 Year in Review noted that, in datasets limited to HTML page requests, dedicated AI crawlers (excluding legacy search bots like Googlebot) averaged around 4.2 percent of requests, while Googlebot itself hovered near 4.5 percent. When broader automated activity is included (training crawlers, real-time scrapers, legacy good bots, and malicious actors), the overall picture aligns with Lumen’s backbone view: machines now dominate. AI-driven traffic is also concentrated in a few high-value verticals. Retail and e-commerce, streaming and media, and travel and hospitality together absorb over 95 percent of it, because structured data on prices, inventory, content, and user behavior delivers the greatest commercial return there.

Who Is Driving the Traffic

A small number of major technology companies account for the overwhelming majority of AI bot volume, creating a striking concentration of influence.

According to HUMAN Security’s 2026 analysis, OpenAI leads decisively with approximately 69 percent of observed AI bot traffic. Its suite includes GPTBot (for bulk training data collection), ChatGPT-User (real-time fetches triggered by user queries), OAI-SearchBot, and emerging agentic tools. Meta follows at roughly 16 percent through Meta-ExternalAgent, which supports Llama model development and AI features across its family of platforms. Anthropic contributes about 11 percent via ClaudeBot and related crawlers. The remaining share is split among Google (whose Googlebot serves dual purposes of search indexing and AI training), Microsoft’s Bingbot, Amazonbot, and smaller entrants such as Bytespider (ByteDance), PerplexityBot, and Applebot.

Cloudflare’s crawler rankings from mid-2025 to mid-2026 show similar patterns, with GPTBot surging dramatically in share while Googlebot remains a heavyweight due to its long-established role. This operator-level concentration means that policy decisions at just a few headquarters—on crawling rates, robots.txt compliance, data usage, and rate limiting—shape the load experienced by millions of websites worldwide.
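To make the robots.txt lever concrete, here is a minimal Python sketch of how a site's rules can be checked against specific crawler names, using the standard library's `urllib.robotparser`. The robots.txt content, the helper name `allowed_agents`, and the example URL are all hypothetical. The check only models what a compliant crawler would conclude; it does not enforce anything.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block two AI crawlers named in the text, allow the rest.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed_agents(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return, for each agent name, whether the rules permit fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    ROBOTS_TXT,
    ["GPTBot", "ClaudeBot", "Googlebot"],
    "https://example.com/articles/1",  # placeholder URL
)
print(result)
# {'GPTBot': False, 'ClaudeBot': False, 'Googlebot': True}
```

Because the file is advisory, its effect depends entirely on whether each operator chooses to honor it, which is why the compliance policies of a few companies matter so much.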

Why Bots Operate at This Scale

The explosion is fueled by the insatiable appetite of modern AI systems for fresh, high-quality data. Traffic breaks down into three primary categories that have evolved rapidly since the launch of ChatGPT in late 2022:

1. Model Training Crawlers (still the dominant share, historically 67–90 percent of AI bot volume). These systematically scan public web pages to gather training data for large language models. The goal is scale: billions of pages to improve accuracy, breadth of knowledge, and overall capabilities. Early 2025 saw training crawlers comprise up to 90 percent of AI traffic; even as diversification occurred, volumes grew 136 percent year-over-year.

2. Real-Time Scrapers and Retrieval Tools (now roughly 25–32 percent and growing fastest in some segments). These fetch live data to power retrieval-augmented generation (RAG) features. When a user asks an AI assistant about current events, stock prices, product availability, or local inventory, an agent-triggered scraper pulls fresh information rather than relying solely on static training data. By some measurements, this category grew more than 15-fold in 2025.

3. Agentic AI and Autonomous Agents (still a smaller base at around 1.7 percent by late 2025 but exploding 7,851 percent year-over-year). These bots do not merely read—they act. They navigate sites, compare options, fill forms, and in some cases complete transactions on behalf of users. The line between helpful automation and potential fraud vectors is narrowing; detection differences between benign and malicious agentic activity can be as slim as half a percentage point.
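A site operator who wants a rough view of this breakdown in their own logs could start by bucketing requests by user-agent token. The sketch below is illustrative only. The roles of GPTBot and ChatGPT-User follow the descriptions in this article, while the other mappings, the sample log entries, and the function names are assumptions. Agentic traffic is left unmapped because the text names no user-agent token for it.

```python
from collections import Counter

# Illustrative mapping from user-agent substrings to the categories above.
# More specific tokens come first; dicts preserve insertion order.
CATEGORY_BY_TOKEN = {
    "chatgpt-user": "real-time retrieval",     # role stated in the article
    "gptbot": "training crawler",              # role stated in the article
    "meta-externalagent": "training crawler",  # assumption
    "claudebot": "training crawler",           # assumption
}


def classify(user_agent: str) -> str:
    """Return the category of the first known token found in the user agent."""
    ua = user_agent.lower()
    for token, category in CATEGORY_BY_TOKEN.items():
        if token in ua:
            return category
    return "other"


def category_shares(user_agents: list[str]) -> dict[str, float]:
    """Fraction of requests per category."""
    counts = Counter(classify(ua) for ua in user_agents)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


# Made-up log sample, simplified user-agent strings.
sample = [
    "GPTBot/1.0",
    "GPTBot/1.0",
    "ChatGPT-User/1.0",
    "Mozilla/5.0 (X11; Linux x86_64)",
]
print(category_shares(sample))
```

User-agent strings are self-reported, so a tally like this is only a starting point and says nothing about bots that disguise themselves.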

Legacy “good bots” (search indexing, uptime checks) and outright malicious actors (ad fraud, credential stuffing, price scraping, DDoS preparation) continue alongside the AI surge. Pre-2022, bots typically represented around 20 percent of traffic, driven mostly by Google’s crawler. Generative AI flipped the equation by making large-scale data collection cheaper, faster, and more automated than ever.

Implications: Opportunities, Frictions, and the Road Ahead

The bot-dominated internet carries clear benefits and equally clear costs that cut across innovation, economics, security, and the open web itself.

On the positive side, the surge accelerates AI capabilities that deliver better search, real-time assistance, personalized tools, and automation across industries. Productivity gains, more relevant results, and new services become possible precisely because these systems consume and synthesize vast amounts of public data. Infrastructure providers argue that programmable, consumption-based networks will rise to meet the demand, spurring innovation in networking, cybersecurity, and dynamic scaling.

Yet the frictions are substantial. Content creators, publishers, and smaller sites absorb much of the load: server costs rise, bandwidth bills climb, analytics become distorted by inflated or synthetic activity, and referral traffic sometimes declines as AI summarizes information without sending users onward. Many operators have responded by tightening robots.txt rules or implementing rate limits; millions of sites now block major AI crawlers outright. Energy consumption in data centers increases alongside traffic volumes. Security teams face blurred lines between legitimate automation and malicious activity, complicating defense while opening new surfaces for fraud—agent-driven account takeovers, synthetic commerce, or coordinated manipulation.
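Rate limiting, one of the responses mentioned above, is often built on a token bucket. The following is a minimal Python sketch of that general technique, not a description of any particular operator's setup. The class name, the parameter values, and the use of explicit timestamps (to keep the example deterministic) are all assumptions.

```python
class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: float, now: float = 0.0):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.updated = now

    def allow(self, now: float) -> bool:
        """Return True and spend one token if the request at time `now` may pass."""
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# One bucket for a single crawler: 1 request/second, burst of 2 (example values).
bucket = TokenBucket(rate_per_sec=1.0, capacity=2.0)
decisions = [bucket.allow(t) for t in (0.0, 0.0, 0.0, 1.0)]
print(decisions)
# [True, True, False, True]
```

In practice a site would keep one bucket per crawler or per client address and answer rejected requests with an HTTP 429 status, but those choices sit outside this sketch.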

Economically, this represents a large-scale transfer: publicly created web content increasingly trains private AI models, often without direct compensation to original creators. Ongoing debates over copyright, fair use, and data licensing reflect the tension. Some frame it as “data colonialism” by dominant tech platforms; others view